Browse, then drill in

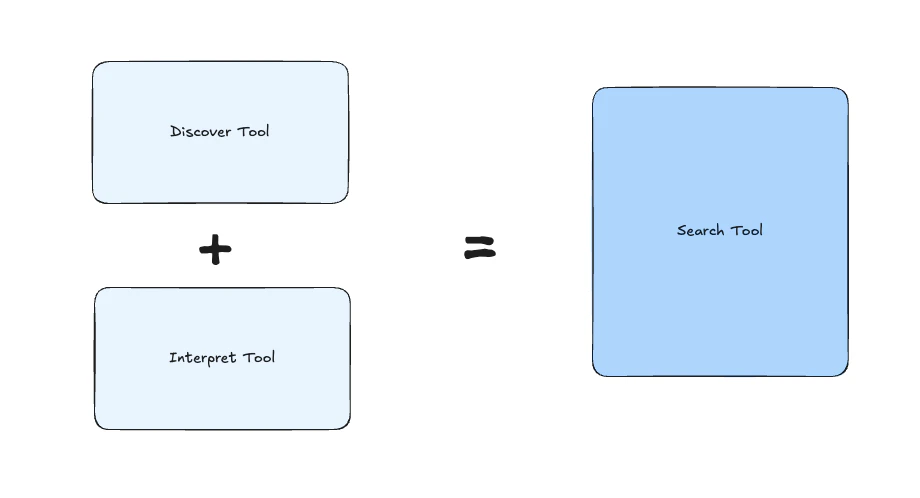

discover → interpret: two calls. First a ranked menu of structural pointers, then a synthesized brief on the items the agent picks. Use when the agent needs to pick before going deep.

Direct answer

search: one call. A synthesized natural-language answer over the graph. Use when the question is self-contained and the agent doesn’t need to pick.

Flow 1 — Browse, then drill in

discover — the menu

Returns a ranked list of structural pointers (entity paths, with scores) that match the query. No prose. The cheapest way to see “what does the graph have on this topic?”
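A discover response is a menu, not an answer, so the agent's next job is to pick. The sketch below assumes each pointer carries a canonical_id, an entity path and a score; only canonical_id is named in these docs, so `path`, `score`, the `pickFromMenu` helper and the sample data are all hypothetical. It shows agent-side selection only, not how the backend ranks.

```typescript
// Assumed shape of one discover result. Only `canonical_id` is documented;
// `path` and `score` are illustrative names for "entity path" and "score".
interface Pointer {
  canonical_id: string;
  path: string;
  score: number;
}

// Hypothetical helper: keep pointers whose score clears `minScore`,
// order them highest first, and return up to `k` ids to drill into.
function pickFromMenu(menu: Pointer[], k: number, minScore: number): string[] {
  return menu
    .slice() // copy so the caller's menu is not reordered
    .filter((p) => p.score >= minScore)
    .sort((a, b) => b.score - a.score)
    .slice(0, k)
    .map((p) => p.canonical_id);
}

// Hypothetical menu for a query such as "billing retries".
const menu: Pointer[] = [
  { canonical_id: "ent_42", path: "services/billing/retry-policy", score: 0.91 },
  { canonical_id: "ent_07", path: "docs/runbooks/payments", score: 0.64 },
  { canonical_id: "ent_13", path: "services/billing/webhooks", score: 0.78 },
  { canonical_id: "ent_99", path: "misc/changelog", score: 0.21 },
];

const picked = pickFromMenu(menu, 2, 0.5);
console.log(picked); // [ 'ent_42', 'ent_13' ]
```

The picked ids are exactly what the agent would hand to interpret next.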

interpret — the brief

Takes one or more canonical_ids from a discover response and returns a 1–2 paragraph brief grounded in the actual underlying source. Use it when the agent has decided which items matter and wants the synthesized take on those specific ones.

Reach for interpret once the agent has run discover and needs the why/what, not the where.
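Flow 1 end to end, as a minimal sketch. `FakeMatrix` is a hypothetical in-memory stand-in whose method names mirror client.matrix.discover and client.matrix.interpret; its argument and return shapes are assumptions, it is synchronous for brevity, and it does not reproduce the @copass/core signatures. The toy scoring (fraction of query terms found in an entity path) merely stands in for the real ranking.

```typescript
// Assumed pointer shape; only `canonical_id` is named in the docs.
interface Pointer {
  canonical_id: string;
  path: string;
  score: number;
}

// Hypothetical entity record: where it lives and the source text a brief is grounded in.
interface Entity {
  path: string;
  source: string;
}

// Hypothetical stand-in for the two Flow 1 calls; not the real SDK.
class FakeMatrix {
  private entities: Record<string, Entity>;

  constructor(entities: Record<string, Entity>) {
    this.entities = entities;
  }

  // The menu: pointers with scores, no prose. Toy score = share of query terms in the path.
  discover(query: string): Pointer[] {
    const terms = query.toLowerCase().split(/\s+/).filter((t) => t.length > 0);
    const out: Pointer[] = [];
    for (const id of Object.keys(this.entities)) {
      const path = this.entities[id].path;
      const hits = terms.filter((t) => path.indexOf(t) !== -1).length;
      if (hits > 0) out.push({ canonical_id: id, path: path, score: hits / terms.length });
    }
    return out.sort((a, b) => b.score - a.score);
  }

  // The brief: one line per requested id, built from that entity's underlying source.
  interpret(canonicalIds: string[]): string {
    return canonicalIds
      .map((id) => {
        const e = this.entities[id];
        if (!e) throw new Error("unknown canonical_id: " + id);
        return e.path + ": " + e.source;
      })
      .join("\n");
  }
}

// Browse, then drill in: one discover, a local pick of the top k, one interpret on the picks.
function browseThenDrill(m: FakeMatrix, query: string, k: number): string {
  const picks = m.discover(query).slice(0, k).map((p) => p.canonical_id);
  return picks.length > 0 ? m.interpret(picks) : "";
}

const matrix = new FakeMatrix({
  ent_1: { path: "billing/retry-policy", source: "Retries back off exponentially." },
  ent_2: { path: "billing/webhooks", source: "Webhooks are signed." },
  ent_3: { path: "auth/sessions", source: "Sessions expire after idle time." },
});

console.log(browseThenDrill(matrix, "billing retry", 1));
// billing/retry-policy: Retries back off exponentially.
```

The design point is the split: discover stays cheap because it returns only pointers, and the agent pays for synthesis only on the ids it chose.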

Flow 2 — Direct answer

search — the answer

Synthesized natural-language answer over the graph. The heaviest call — but the right one when the question is self-contained and the agent doesn’t need to pick.

Picking the right flow

| Situation | Flow | Calls |
|---|---|---|
| “What does the graph have on X?” | Browse | discover |
| “Show me more like this” | Browse | discover (window-aware: skips what was already returned) |
| Agent picked 2–3 items and wants the synthesized take | Browse | interpret |
| Hover cards, typeahead, live previews | Browse | discover |
| User asked a self-contained question | Direct | search |
| End-of-thread synthesis (“write this up”) | Direct | search |
| Need to budget tokens | Browse | Start with discover; escalate only if needed |
| Always the same document | None | Skip retrieval; read the source directly |
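The table above reads naturally as a routing function. This sketch encodes the same rows; the `Situation` labels, the `Route` shape and the `route` function are hypothetical, and treating interpret as the escalation step for the token-budget row is one reasonable reading of "escalate only if needed", not documented behavior.

```typescript
// Hypothetical labels, one per row of the "Picking the right flow" table.
type Situation =
  | "what-does-graph-have"
  | "more-like-this"
  | "synthesize-picked-items"
  | "hover-typeahead-preview"
  | "self-contained-question"
  | "end-of-thread-synthesis"
  | "budget-tokens"
  | "always-same-document";

interface Route {
  flow: "browse" | "direct" | "none";
  calls: string[]; // calls issued up front, in order
  escalateTo?: "interpret"; // assumed optional follow-up if the first result is not enough
}

function route(s: Situation): Route {
  switch (s) {
    case "what-does-graph-have":
    case "more-like-this": // window-aware: a repeat discover skips what was already returned
    case "hover-typeahead-preview":
      return { flow: "browse", calls: ["discover"] };
    case "synthesize-picked-items":
      return { flow: "browse", calls: ["interpret"] };
    case "self-contained-question":
    case "end-of-thread-synthesis":
      return { flow: "direct", calls: ["search"] };
    case "budget-tokens":
      // Start with the cheapest call; escalation is decided after seeing the menu.
      return { flow: "browse", calls: ["discover"], escalateTo: "interpret" };
    case "always-same-document":
      return { flow: "none", calls: [] }; // skip retrieval, read the source directly
    default: {
      // Compile-time exhaustiveness check: adding a Situation without a case fails here.
      const unreachable: never = s;
      throw new Error("unhandled situation: " + unreachable);
    }
  }
}
```

For example, `route("self-contained-question")` yields a direct route with the single call `search`, while `route("budget-tokens")` starts with `discover` and leaves interpret as an optional second step.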

Composition pattern

A typical multi-turn flow looks like this:

Memory across turns

Every call is window-aware. Pass conversation history and the system pushes down an exclusion: items the agent has already received don’t get redelivered. So discover on turn 2 reaches deeper than turn 1, automatically.
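To make "reaches deeper" concrete, here is a toy model of the exclusion. In the product the exclusion is derived from the conversation history you pass; this sketch instead keeps an explicit record of already-delivered canonical_ids and filters a fixed ranking, so each turn surfaces the next-best pointers. `WindowAwareMenu` and its members are hypothetical names, not part of any Copass API.

```typescript
interface Pointer {
  canonical_id: string;
  score: number;
}

// Toy model of window-aware discover: anything delivered in an earlier turn
// is excluded, so each turn returns the best pointers not yet seen.
class WindowAwareMenu {
  private delivered: Record<string, true> = {};
  private ranked: Pointer[];

  constructor(ranked: Pointer[]) {
    this.ranked = ranked.slice().sort((a, b) => b.score - a.score);
  }

  discover(k: number): string[] {
    const fresh = this.ranked.filter((p) => !this.delivered[p.canonical_id]).slice(0, k);
    for (const p of fresh) this.delivered[p.canonical_id] = true; // remember this turn's results
    return fresh.map((p) => p.canonical_id);
  }
}

const conversation = new WindowAwareMenu([
  { canonical_id: "a", score: 0.9 },
  { canonical_id: "b", score: 0.8 },
  { canonical_id: "c", score: 0.7 },
  { canonical_id: "d", score: 0.6 },
]);

console.log(conversation.discover(2)); // turn 1: [ 'a', 'b' ]
console.log(conversation.discover(2)); // turn 2: [ 'c', 'd' ]
```

The same request on turn 2 returns different, lower-ranked items because the top ones are already in the agent's window.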

See Window-Aware Retrieval for the mechanics and the edge cases.

Where these calls live

Every call hits the same backend. You can invoke them from:

- CLI: `copass discover|interpret|search` (with `--json` for scripting). See Copass CLI.
- MCP: exposed as tools to any MCP client (Claude Code, Cursor, Claude Desktop). See MCP Server.
- SDK: `client.matrix.{discover,interpret,search}` from `@copass/core`. See SDK.
- Inside an agent: the Agent Router gives the agent these as tools automatically when a sandbox is attached.

Next steps

- Sandboxes — the tenancy model every retrieval call is scoped against.

- Progressive Disclosure — first-principles framing of why retrieval is a gradient.

- Window-Aware Retrieval — how memory shapes each call.

- Agent Router — the runtime that puts these calls in the agent’s hands.